TL;DR

Gitcoin conducted a predictive funding experiment during GG23 where data scientists forecasted how $1.5M in grants would be distributed. Eleven models were submitted within a 36-hour window, with the winning entry achieving an error score of 0.016—substantially better than random prediction (~0.04). The initiative demonstrated that predictive modeling and prediction markets can enhance grant scalability, detect anomalies, and engage technical communities.

Background

In early 2025, Gitcoin launched a data science competition inviting model builders to predict GG23 funding allocations before the round commenced. A $10,000 prize pool, jointly funded by Gitcoin and the Ethereum Foundation Unblocking Pilots team, tested whether models could reliably estimate funding outcomes. Potential benefits included engaging data science communities, surfacing anomalous results indicating manipulation, and enabling "shadow rounds" using previous winning models for new project evaluation.

Recap

The application deadline was March 31st, 2025, with the round opening April 2nd—creating a 36-hour prediction window. Prize distribution: $5,000 first place, $3,000 second, $2,000 third. Mandatory model writeups were required for prize consideration.

Results

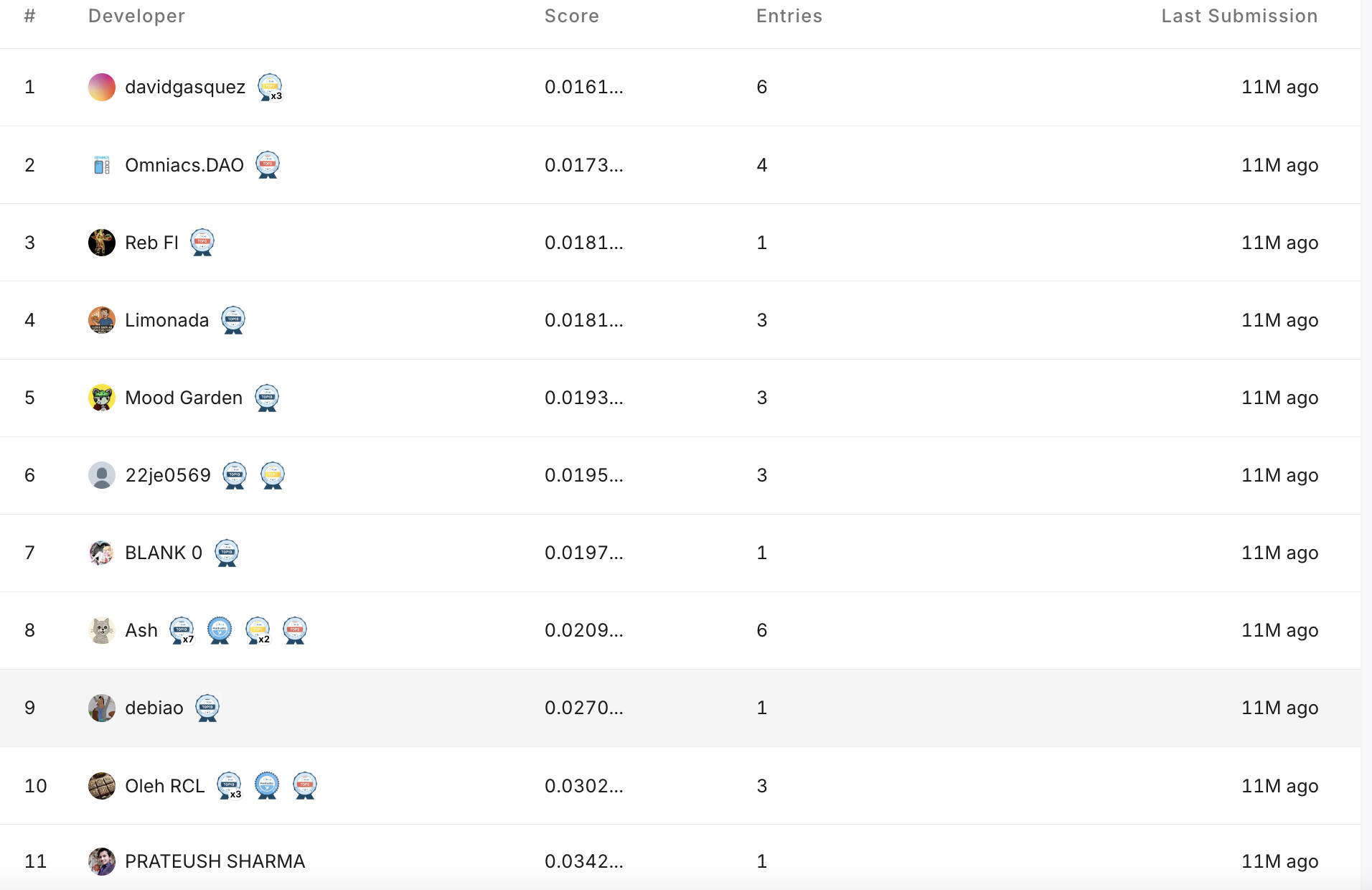

Participation: Eleven valid submissions received, with seven providing model documentation.

Performance: Evaluated using root mean squared error (RMSE), lower scores indicate accuracy:

- 1st: David Gasquez—0.016

- 2nd: Omniacs DAO—0.0173

- 3rd: Limonada—0.018

Random models score approximately 0.04. The lowest-ranked submission scored 0.034, showing significant variance between top performers.

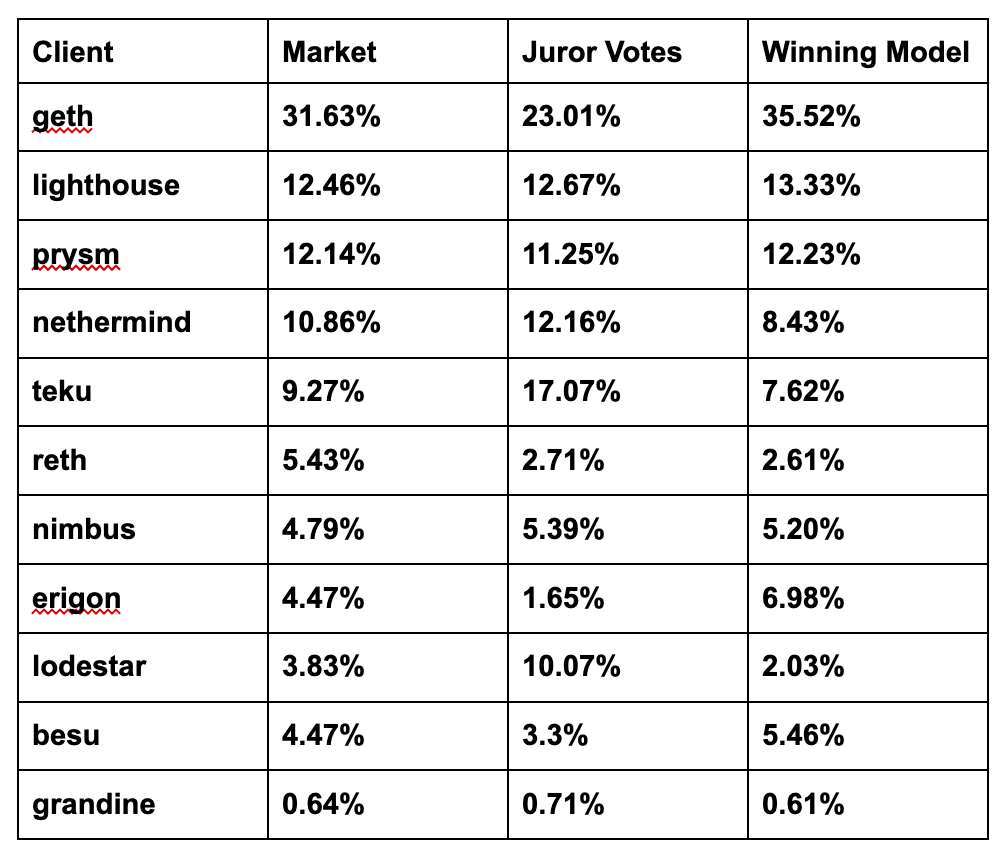

Information Surfacing: Winning models employed XGBoost algorithms with feature engineering, emphasizing contributor counts, per-contributor amounts from previous rounds, and project sentiment scores.

Takeaways

Three recommendations emerged:

-

Scalability: Predictive competitions reduce grant administration costs by generating reusable models for future rounds.

-

Collusion Resistance: Independent allocation lists provide fallback options if fraud detection suggests manipulation, deterring sybil attacks since attackers cannot guarantee their efforts translate to funding.

-

Markets: Concurrent prediction markets attract traders while surfacing collective expectations and providing additional allocation signals.

Limitations

Implementation requires: publicly disclosed funding results, sufficient time between eligibility and allocations for modeling, and clear frameworks preventing operator bias in list selection.

What's Next

Future grant rounds should integrate data science contests and prediction markets. Gitcoin's business opportunity involves becoming a platform where tested models are queried by funders for allocation guidance.